SASRA: Semantically-aware Spatio-temporal Reasoning Agent for Vision-and-Language Navigation in Continuous Environments

SRI International has developed a new learning-based approach that enables mobile robots to emulate human-like semantic understanding. The robot can use semantic scene structure to reason about the world and attend to relevant semantic landmarks when forming navigation strategies. It can also learn efficiently from past experience to explore new environments, for example by discovering common semantic scene entities that generalize to similar but previously unseen places.

This approach can be adapted to a variety of applications. SRI has developed novel deep reinforcement learning (DRL) techniques that incorporate real-time semantic scene information to improve learning-based autonomous navigation in new, unknown environments. DRL techniques have the potential to outperform classical solutions, but at the cost of a significantly increased computational load. By encoding explicit scene semantics into a map [3] or a graph representation [2], SRI’s approach achieves better or comparable results relative to existing learning-based solutions while operating within a well-defined time or computational budget.
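To make "encoding explicit scene semantics into a map" more concrete, the sketch below shows one common way such a map can be built: a top-down grid in which each cell accumulates votes for the semantic labels observed there, so repeated observations of the same place reinforce its label. This is a hypothetical illustration, not SRI's implementation. The class name `SemanticGridMap`, its methods, and the assumption that labeled points have already been projected into a world frame (for example from a depth image, camera intrinsics, and robot pose) are all illustrative choices.

```python
from collections import Counter


class SemanticGridMap:
    """Hypothetical top-down grid that accumulates semantic labels over time.

    Each cell keeps a vote count per class label; the cell's label is the
    most frequently observed class.
    """

    def __init__(self, width_m: float, height_m: float, cell_size_m: float):
        self.cell_size = cell_size_m
        self.cols = int(width_m / cell_size_m)
        self.rows = int(height_m / cell_size_m)
        # Sparse storage: only cells that have been observed get a Counter.
        self.votes: dict[tuple[int, int], Counter] = {}

    def _to_cell(self, x: float, y: float):
        """Map world coordinates (meters) to a (row, col) cell, or None if outside."""
        col = int(x // self.cell_size)
        row = int(y // self.cell_size)
        if 0 <= row < self.rows and 0 <= col < self.cols:
            return row, col
        return None

    def integrate(self, points):
        """Add labeled points (x, y, z, label), assumed to be in the world frame."""
        for x, y, _z, label in points:  # height is ignored in a top-down map
            cell = self._to_cell(x, y)
            if cell is not None:
                self.votes.setdefault(cell, Counter())[label] += 1

    def label_at(self, x: float, y: float):
        """Return the majority label of the cell containing (x, y), or None."""
        cell = self._to_cell(x, y)
        if cell is None or cell not in self.votes:
            return None
        return self.votes[cell].most_common(1)[0][0]


if __name__ == "__main__":
    m = SemanticGridMap(width_m=10.0, height_m=10.0, cell_size_m=0.5)
    m.integrate([(1.1, 2.2, 0.3, "chair"), (1.2, 2.3, 0.5, "chair"),
                 (1.3, 2.4, 0.9, "table")])
    print(m.label_at(1.0, 2.0))  # all three points fall in the same cell
```

Because each cell stores votes rather than a single overwritten label, the map behaves as a simple memory: a noisy misclassification in one frame is outvoted by consistent observations across frames.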

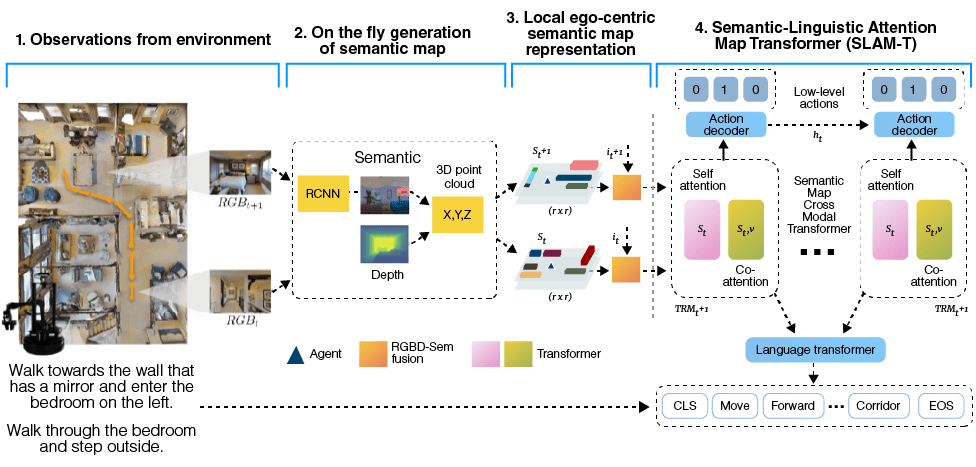

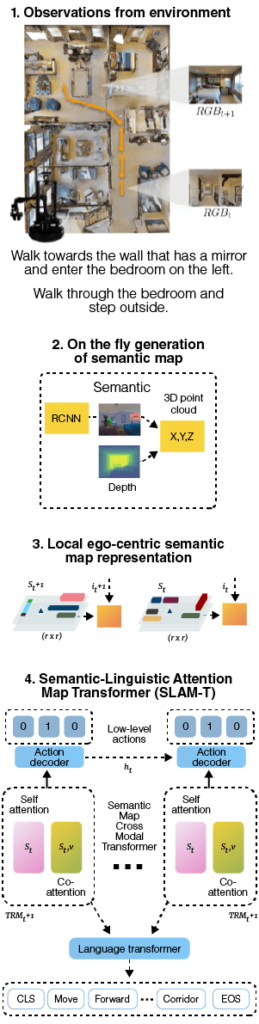

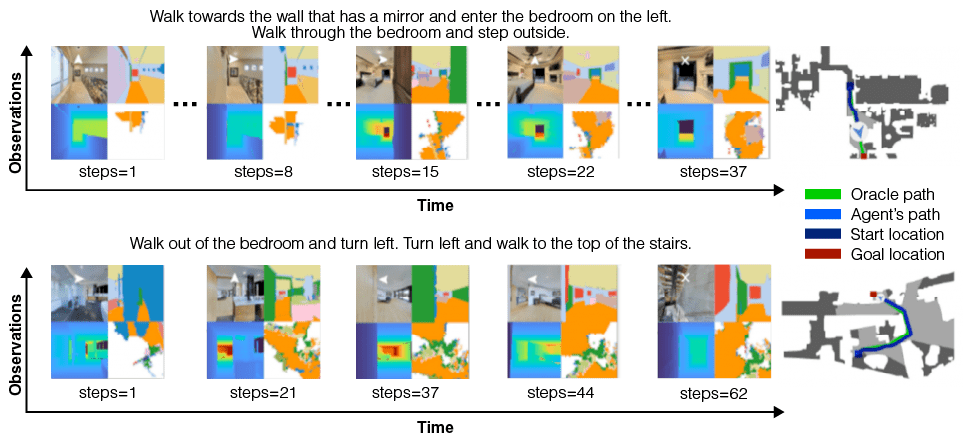

SRI has applied this approach to create a new vision-and-language navigation (VLN) framework [1] called SASRA (Semantically-aware Spatio-temporal Reasoning Agent). VLN requires an autonomous robot to follow natural language instructions from humans in unseen environments. Existing learning-based methods struggle because they focus primarily on raw visual observations and lack the semantic reasoning capabilities that are crucial for generalizing to new environments. To overcome these limitations, SRI developed a temporal memory in the form of a dynamic semantic map and performs cross-modal grounding to align the map and language modalities, enabling more effective VLN.
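Cross-modal grounding is often realized with attention, where each instruction token scores every map cell and gathers a weighted summary of the cells most relevant to it. The sketch below shows plain scaled dot-product attention from word features to map-cell features. It is an assumption about the general technique, not a description of SASRA's architecture: the function names are hypothetical, the feature vectors are toy values, and the learned query/key/value projections a real model would use are omitted (map features serve directly as both keys and values and must share the word-feature dimension).

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_modal_attention(word_feats, map_feats):
    """Attend from each word vector over all map-cell vectors.

    word_feats: list of d-dimensional vectors (one per instruction token).
    map_feats:  non-empty list of d-dimensional vectors (one per map cell).
    Returns (weights, contexts): per-word attention weights over cells and
    the per-word weighted average of map-cell features.
    """
    d = len(word_feats[0])
    scale = 1.0 / math.sqrt(d)  # standard scaling for dot-product attention
    weights, contexts = [], []
    for q in word_feats:
        # Similarity between this word and every map cell.
        scores = [scale * sum(qi * ki for qi, ki in zip(q, k)) for k in map_feats]
        w = softmax(scores)
        # Grounded context: map features weighted by their relevance to the word.
        ctx = [sum(wj * k[i] for wj, k in zip(w, map_feats)) for i in range(d)]
        weights.append(w)
        contexts.append(ctx)
    return weights, contexts


if __name__ == "__main__":
    # Toy 2-D features: word 0 resembles cell 0, word 1 resembles cell 1.
    words = [[1.0, 0.0], [0.0, 1.0]]
    cells = [[2.0, 0.0], [0.0, 2.0], [0.1, 0.1]]
    w, _ = cross_modal_attention(words, cells)
    for i, row in enumerate(w):
        best = max(range(len(row)), key=lambda j: row[j])
        print(f"word {i} attends most to cell {best}")
```

In this toy case each word places most of its weight on the map cell whose features it matches, which is the alignment between language and map modalities that grounding aims to produce.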