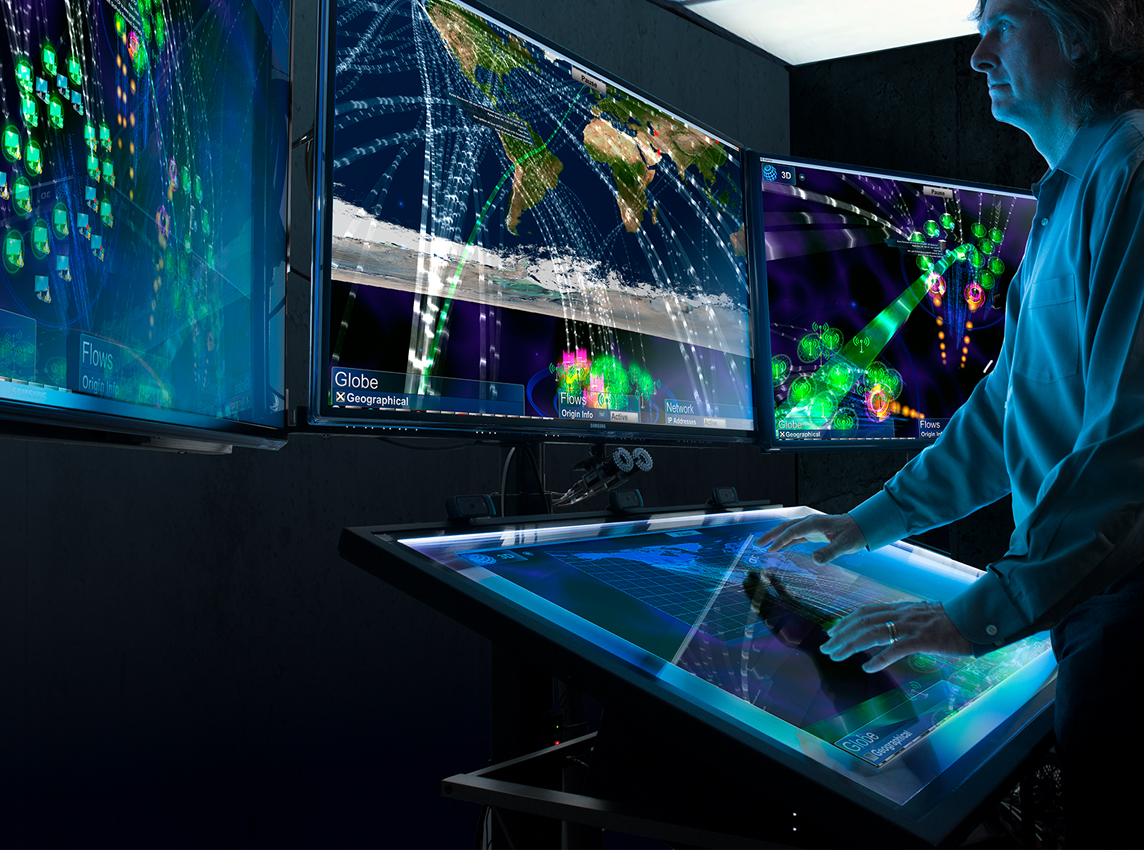

Computer Science Lab

Building, assessing, and defending the vital computer systems that affect our lives

We study the logical foundations of scalable systems beyond the scope of traditional testing or simulation, and we create and apply high-level tools for rigorous mechanical analysis.

Focus areas

The Computer Science Lab develops leading-edge tools and methods for areas including computer security, high-assurance systems, advanced user interfaces, computer networking, robotics, biotechnology, and nanotechnology.

Latest publications

- Deductive Synthesis of the Unification Algorithm: The Automation of Introspection

  We are working to create the first automatic deductive synthesis of a unification algorithm. The program is extracted from a proof of the existence of an output substitution that satisfies…

- Semantic Instrumentation of Virtual Environments for Training

  We discuss an approach in which the virtual environment is semantically instrumented to allow tracking of, and reasoning about, open-ended learner activity within it.

- Diagnosis cloud: Sharing knowledge across cellular networks

  This paper presents a novel diagnosis cloud framework that enables the extraction and transfer of knowledge from one network to another. It also presents use cases and requirements. We present…

Computer Science Lab leadership

- William Mark

  Senior Technology Advisor, Commercialization

Featured researchers

- Ulf Lindqvist

  Senior Technical Director, Computer Science Lab

- Peter Neumann

  Principal Scientist, Computer Science Lab